Claude Code Pricing: Subscriptions vs API, Token Visibility, and the Models That Actually Work

Claude subscriptions are 15-30x cheaper than the API. Full cost breakdown of Claude Code plans, OpenRouter setup, best API models for agentic coding, and an open-source token visibility dashboard.

Claude subscriptions are 15-30x cheaper than the API. But Anthropic just killed every third-party tool that used them — and you still can’t see where your tokens go.

I run Claude Code on a Max plan. 440 sessions last month, 18,000 turns. I built a dashboard to track what that actually costs: $1,588 in API-equivalent tokens. Covered by a $200 subscription.

Here’s the full breakdown — what April 4 killed, which API models actually work for agentic coding, and how to see exactly where your budget goes.

What You’ll Learn

What April 4 actually killed — which tools lost subscription access and why Anthropic pulled the plug

The full cost landscape — subscriptions and API models in one table, ranked by the metric that actually predicts agentic performance

Why SWE-bench is misleading — it didn’t match my experience. Agentic Index did.

OpenRouter setup: two environment variables, 400+ models, done

Token visibility: an open-source dashboard that shows exactly where your Claude Code tokens go

1. The April 4 Policy Change: What Died, What Lives

April 4, 2026: Anthropic announced Claude subscriptions no longer work with third-party tools. If you were using Cline, Cursor, Windsurf, OpenClaw, or any non-Anthropic harness through your subscription — it stopped working.

What Still Works with Subscriptions

Claude Code CLI — Anthropic’s official agentic coding tool

Claude Code extension for VS Code — same mechanism

Claude.ai — web and mobile interface, including agentic Code sessions

Cowork — user-friendly agent inside Claude Desktop

Dispatch — the orchestration layer for Cowork and code

What Died

Cline — popular VS Code agent, relied on subscription auth

Cursor — AI-native editor, subscription routing cut off

Windsurf — Codeium’s editor, same story

OpenClaw — open-source Claude Code alternative with 135,000+ instances

Custom agents and automation — anything routing through subscription billing

For automation workflows (n8n, OpenClaw, custom agents): You’re on API billing now. The next section shows you exactly what that costs — and why it might actually be cheaper than you think.

—

OpenClaw footnote: A community workaround briefly routed OpenClaw through Claude Code’s MCP bridge. Anthropic closed it with a single exact substring match that blocks OpenClaw’s system prompt.

2. The Full Cost Landscape

Many builders and PMs either overpay for a subscription they don’t fully use, or overpay for API models because they default to Sonnet. Here’s everything in one place.

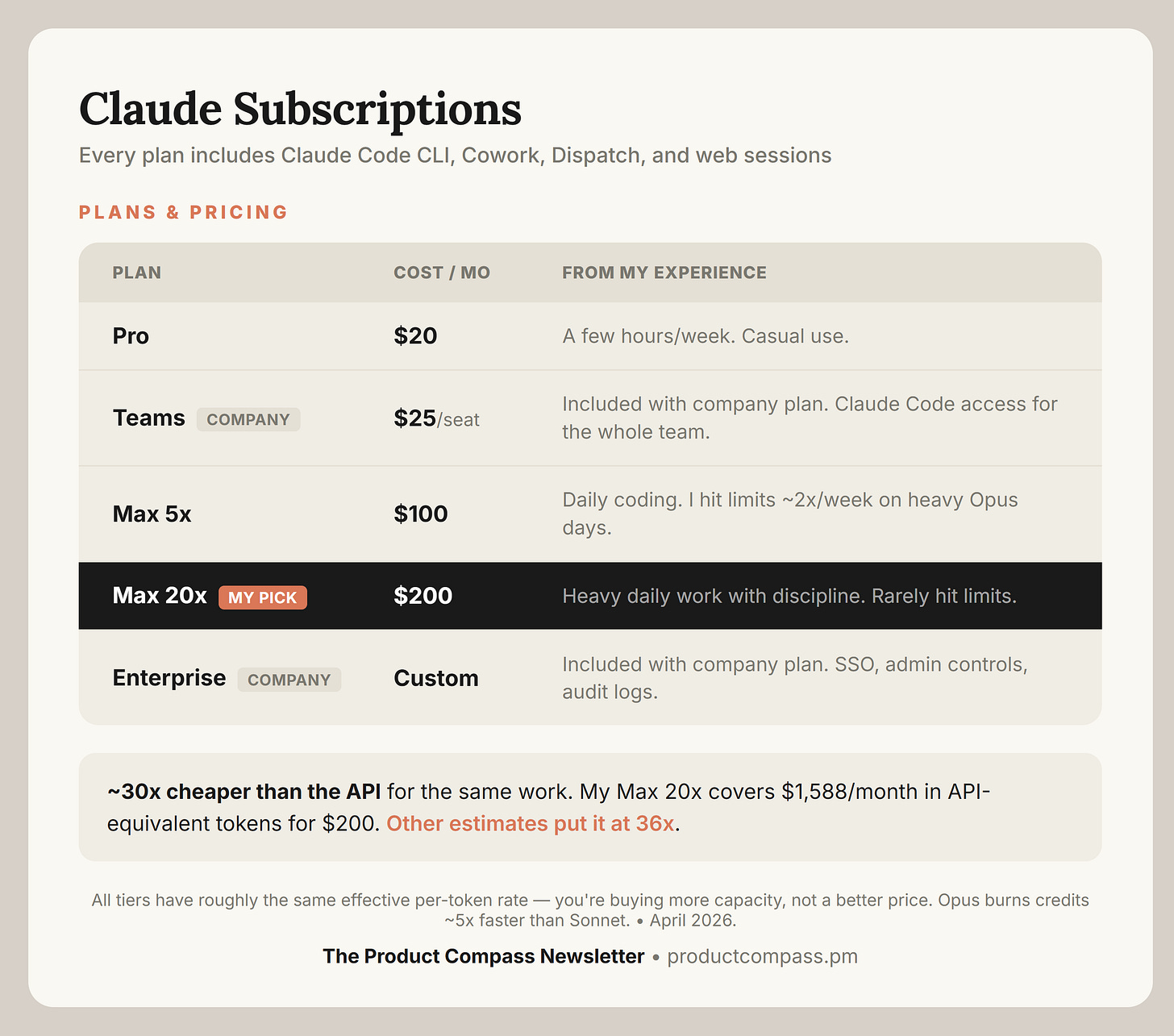

Claude Subscriptions: Flat Cost, Predictable

Every tier requires discipline. Opus burns credits ~5x faster than Sonnet, so use Opus for hard problems and switch to Sonnet for straightforward tasks. Run /compact regularly. Without this, you’ll hit limits on any plan — including Max 20x.

How cheap is this compared to API? My Max 20x costs $200/month. The dashboard I built (Section 4) shows $1,588 in API-equivalent costs for Claude Code alone — and I use Code and Cowork roughly 50:50, so the real API bill would be far higher. The Claude subscription runs roughly 15-30x cheaper than paying per token for the same work.
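The 15-30x figure can be sanity-checked with simple arithmetic. The sketch below uses the $200 plan price and the $1,588 Claude Code estimate from above. The assumption that Cowork consumes about as many API-equivalent dollars as Code is inferred from the 50:50 split; the dashboard does not measure it.

```python
# Rough sanity check of the subscription-vs-API ratio.
SUBSCRIPTION_USD = 200.0       # Max 20x monthly price (from the article)
CODE_API_EQUIV_USD = 1588.0    # dashboard estimate, Claude Code only


def savings_ratio(api_equiv_usd: float, subscription_usd: float) -> float:
    """How many times cheaper the subscription is than the API-equivalent bill."""
    return api_equiv_usd / subscription_usd


code_only = savings_ratio(CODE_API_EQUIV_USD, SUBSCRIPTION_USD)

# ASSUMPTION: Cowork costs roughly as much as Code (50:50 usage split).
code_plus_cowork = savings_ratio(CODE_API_EQUIV_USD * 2, SUBSCRIPTION_USD)

print(f"Code only:        {code_only:.1f}x")         # 7.9x
print(f"Code plus Cowork: {code_plus_cowork:.1f}x")  # 15.9x
```

Under that assumption, the measured month lands near the low end of the 15-30x range.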

Teams and Enterprise subscribers: If your company already provides Claude Teams or Enterprise, you have Claude Code access included. Check with your admin — you may not need a personal subscription at all.

API: Pay Per Token, Choose Your Model

OpenRouter gives you access to 400+ models. Most aren't worth your time for agentic coding. I tested dozens — the table below is what survived real multi-step Claude Code sessions, ranked by Agentic Index:

Why Agentic Index?

SWE-Bench (Verified and Pro) tests isolated bug fixes — one issue, one patch, no iteration. It didn't match my experience.

Agentic Index (from Aider's polyglot benchmark) measures what Claude Code actually does: read files, plan changes, apply diffs, run tools, recover from errors, iterate. It matched what I saw in practice.

Claude Subscription vs API

Subscription wins if you code with Claude Code daily. Opus is the best agentic model — and cost per token isn’t cost per correct token. A cheaper model that needs many iterations to fix its own errors costs more than Opus getting it right the first time. Cowork, Dispatch, and web-based Claude Code sessions also require a subscription.
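To make "cost per correct token" concrete, here is a minimal sketch. Every number in it is hypothetical and chosen only to illustrate the effect; none of them is a measurement of any real model.

```python
def cost_per_solved_task(price_per_mtok: float,
                         tokens_per_attempt: int,
                         attempts: float) -> float:
    """Dollar cost to get one task solved, counting every retry."""
    return price_per_mtok * tokens_per_attempt * attempts / 1_000_000


def break_even_attempts(premium_price: float, budget_price: float) -> float:
    """Attempts at which the budget model costs as much as one premium attempt.

    Assumes both models use the same number of tokens per attempt.
    """
    return premium_price / budget_price


# HYPOTHETICAL inputs: prices in $/M tokens, 200K tokens per attempt.
premium = cost_per_solved_task(15.0, 200_000, attempts=1)
budget = cost_per_solved_task(3.0, 200_000, attempts=6)

print(f"premium, 1 attempt: ${premium:.2f}")  # $3.00
print(f"budget, 6 attempts: ${budget:.2f}")   # $3.60
print(f"break-even attempts: {break_even_attempts(15.0, 3.0):.0f}")  # 5
```

With these made-up inputs, the cheaper model stops being cheaper once it needs more than five attempts per task.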

API wins if your usage is moderate, spiky, or you’re running automation workflows. Opus and Sonnet via API are hard to justify when the subscription gives you flat-rate access — and budget models match or beat Sonnet’s agentic performance:

GLM-5.1: matches Opus 4.6 on agentic performance (67.0 vs 67.6) at 1/12 the input cost. Default for automation.

GLM-5: same family, cheaper. Matches Sonnet 4.6’s agentic score at 1/4 the cost.

MiniMax M2.7: when cost matters most. About 1/10 the cost of Sonnet 4.6, and still solid.

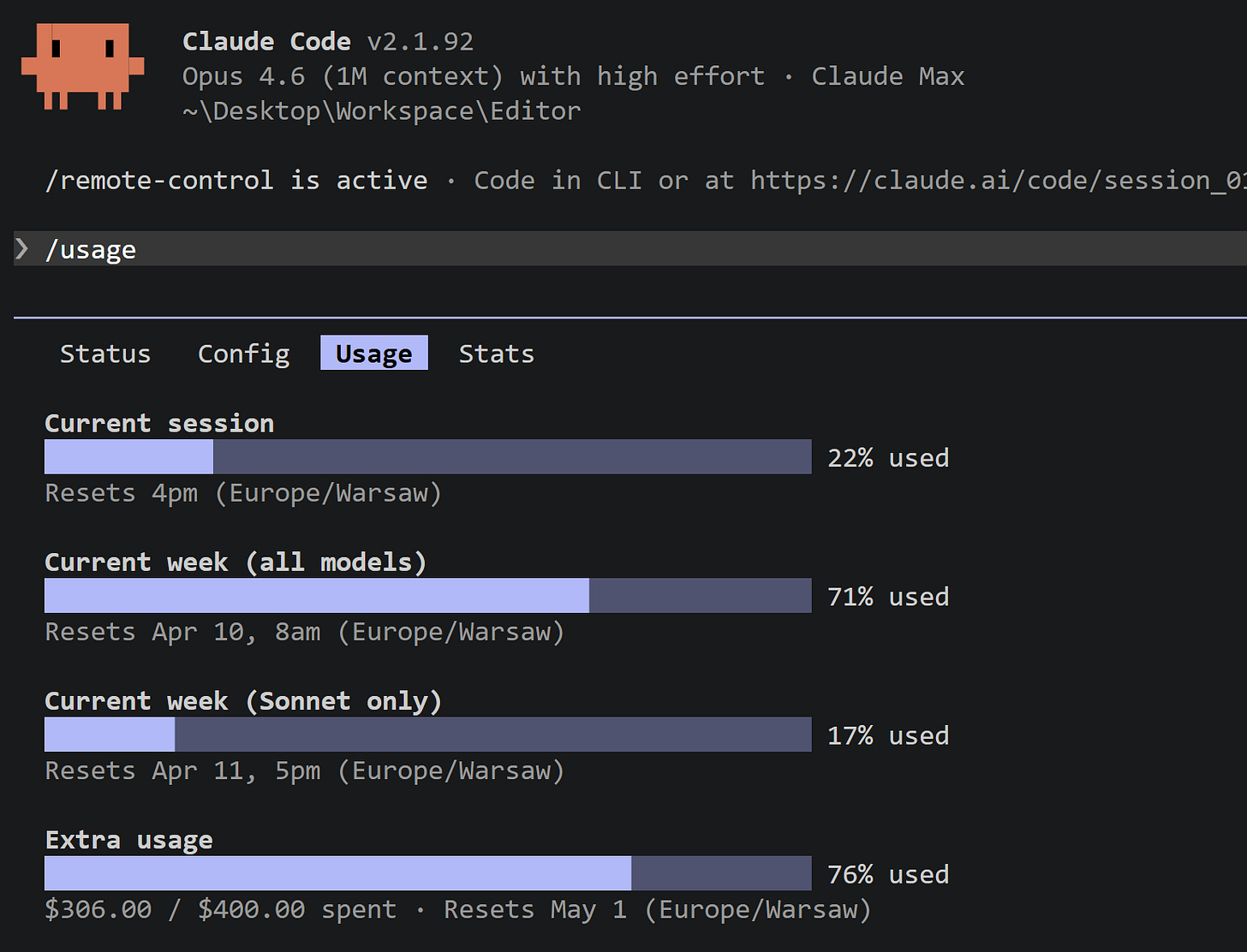

One Thing Missing: Token Visibility

The subscription model has one real frustration: you can't see where your tokens go. /usage exists but doesn't break down by model, project, or session — so when you hit limits unexpectedly, it's not obvious why.

Anthropic knows: there are open feature requests for per-prompt token breakdown and a real-time usage indicator. I built an open source solution — Section 4 covers it.

3. OpenRouter Setup for Claude Code

Claude Code isn’t locked to Anthropic’s models. Switch ANTHROPIC_BASE_URL and it routes to any compatible API. Add a .claude/settings.local.json to your project root, for example:

{
  "env": {
    "ANTHROPIC_BASE_URL": "https://openrouter.ai/api",
    "ANTHROPIC_AUTH_TOKEN": "sk-or-v1-{your-OpenRouter-API-key}",
    "ANTHROPIC_API_KEY": ""
  },
  "model": "z-ai/glm-5.1"
}

Why OpenRouter over direct provider APIs?

One key, one endpoint, 400+ models. Switch between GLM-5, Qwen, Gemini, and GPT-5 by changing one variable—no juggling multiple API keys or base URLs.

Switching the API works for the Claude Code CLI, but not for the Claude Code extension. In practice, this doesn’t make a huge difference, because you can still run the CLI inside the VS Code interface.
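If you would rather not paste the key into a file by hand, a small script can write the same settings from an environment variable. This is a sketch: the `OPENROUTER_API_KEY` variable name and the `build_settings` / `write_settings` helpers are hypothetical names of my own; the JSON keys and values mirror the example above.

```python
import json
import os
from pathlib import Path


def build_settings(api_key: str, model: str = "z-ai/glm-5.1") -> dict:
    """Return the settings dict shown in the example above."""
    return {
        "env": {
            "ANTHROPIC_BASE_URL": "https://openrouter.ai/api",
            "ANTHROPIC_AUTH_TOKEN": api_key,
            "ANTHROPIC_API_KEY": "",  # left empty, as in the example
        },
        "model": model,
    }


def write_settings(project_root: Path, api_key: str,
                   model: str = "z-ai/glm-5.1") -> Path:
    """Write .claude/settings.local.json under project_root and return its path."""
    path = project_root / ".claude" / "settings.local.json"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(build_settings(api_key, model), indent=2) + "\n")
    return path


if __name__ == "__main__":
    # ASSUMPTION: the key is exported as OPENROUTER_API_KEY (hypothetical name).
    key = os.environ.get("OPENROUTER_API_KEY", "")
    if key:
        print(f"Wrote {write_settings(Path.cwd(), key)}")
    else:
        print("Set OPENROUTER_API_KEY first")
```

Since the file contains a secret, it is worth making sure `.claude/settings.local.json` is excluded from version control.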

Step-by-step demo:

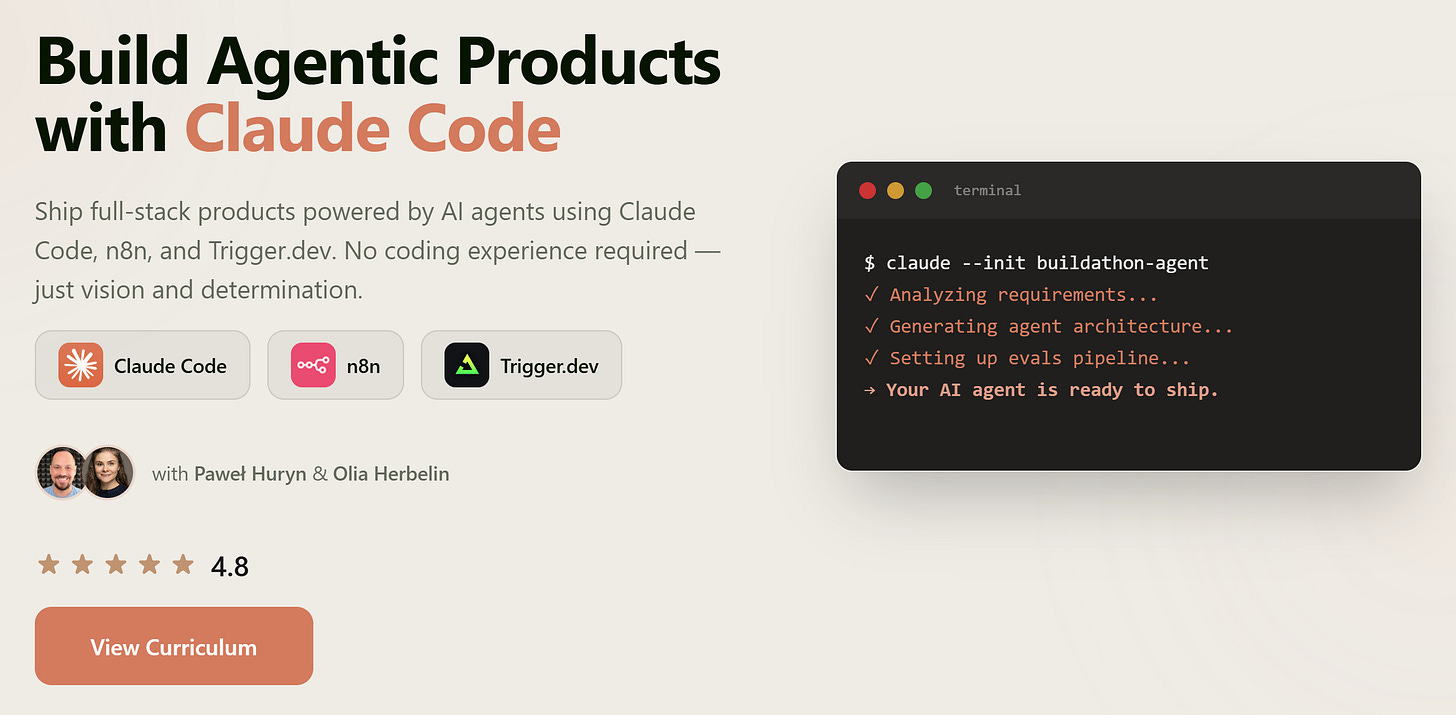


4. Claude Code Usage Dashboard: See Where Your Tokens Go for Free

I mentioned the visibility gap in Section 2 — /usage doesn’t break down by model, project, or session. You hit limits and have no idea why.

That frustrated me enough to build something.

I open-sourced a local dashboard that reads your Claude Code transcripts and shows you exactly where tokens go. No cloud, no telemetry: everything stays on your machine.

You can star it, fork it, and make it your own. GitHub (open source, MIT): https://github.com/phuryn/claude-usage

What It Shows

Cost per day, broken down by model (Opus vs Sonnet vs Haiku)

Sessions and turns over time

Filter by model, filter by time range (7d / 30d / 90d)

Top projects by tokens — see which codebase is eating your budget

Auto-refreshes every 30 seconds at localhost:8080
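To give a feel for how a dashboard like this can work, here is a minimal sketch that sums token usage per model from JSONL transcript lines. It is not the dashboard's actual code. The record layout (a `message` object with `model` and a `usage` dict carrying the four token fields below) and the `~/.claude/projects` location are assumptions about how Claude Code stores transcripts locally; check your own files before relying on them.

```python
import json
from collections import defaultdict
from pathlib import Path
from typing import Iterable

# ASSUMED field names inside message["usage"].
USAGE_FIELDS = (
    "input_tokens",
    "output_tokens",
    "cache_read_input_tokens",
    "cache_creation_input_tokens",
)


def tokens_by_model(lines: Iterable[str]) -> dict:
    """Sum usage fields per model over JSONL lines, skipping unusable lines."""
    totals = defaultdict(lambda: dict.fromkeys(USAGE_FIELDS, 0))
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # e.g. a partially written last line
        message = record.get("message") if isinstance(record, dict) else None
        if not isinstance(message, dict):
            continue
        usage = message.get("usage")
        if not isinstance(usage, dict):
            continue  # user turns and metadata carry no usage
        model = message.get("model", "unknown")
        for field in USAGE_FIELDS:
            totals[model][field] += int(usage.get(field) or 0)
    return {model: dict(counts) for model, counts in totals.items()}


def scan_transcripts(root: Path) -> dict:
    """Aggregate every *.jsonl file found under root."""
    def all_lines():
        for path in sorted(root.rglob("*.jsonl")):
            with path.open(encoding="utf-8", errors="replace") as fh:
                yield from fh
    return tokens_by_model(all_lines())
```

Under the assumed layout, `scan_transcripts(Path.home() / ".claude" / "projects")` would return one totals dict per model. Keeping cache reads and cache writes as separate fields matters, because they are typically priced differently from regular input tokens.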

My 30-Day Numbers

440 sessions. 18,000 turns. $1,588 in estimated API costs.

All covered by the Max subscription. For a fraction of the price.

First thing the dashboard caught: one day spiked to 700 million cached tokens. Not my usage — an Anthropic bug. Without the dashboard, I’d never have noticed.

Setup & Run on Windows

git clone https://github.com/phuryn/claude-usage
cd claude-usage
python cli.py dashboard

Setup & Run on macOS, Linux

git clone https://github.com/phuryn/claude-usage
cd claude-usage
python3 cli.py dashboard

What It Measures

Claude Code Usage Dashboard works with any Claude subscription (Pro, Max, Team, Enterprise) running Claude Code — CLI, VS Code extension, and dispatched code sessions.

Cowork and web code sessions are not included. For those, we have to wait for Anthropic to ship a better solution.

5. Token-Saving Techniques (Works Everywhere)

Before switching models or tools, optimize what you’re already doing. These apply whether you’re on subscription or API.

Use /compact Aggressively

Context accumulates fast. Every file read, tool call, and response adds tokens. A 30-minute session easily hits 200K tokens, and you’re paying for all of it on every subsequent message.

Run /compact after major milestones. It preserves intent and drops noise.
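A toy model shows why this matters. Assume, purely for illustration, that every turn adds the same number of tokens to the context and that the whole context is sent as input on each turn (cache discounts and the cost of compaction itself are ignored). Billed input then grows quadratically with the number of turns, and periodic compaction cuts it sharply.

```python
def billed_input_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens when every turn resends the whole context."""
    # Turn k sends k * tokens_per_turn tokens; sum over k = 1..turns.
    return tokens_per_turn * turns * (turns + 1) // 2


def with_compaction(turns: int, tokens_per_turn: int,
                    compact_every: int, summary_tokens: int) -> int:
    """Same model, but the context shrinks to a summary every N turns."""
    total = 0
    context = 0
    for turn in range(1, turns + 1):
        context += tokens_per_turn
        total += context               # this turn's input is the full context
        if turn % compact_every == 0:
            context = summary_tokens   # HYPOTHETICAL size of the summary
    return total


# HYPOTHETICAL session: 40 turns of 5,000 tokens, ending at a 200K context.
print(billed_input_tokens(40, 5_000))            # 4100000
print(with_compaction(40, 5_000, 10, 5_000))     # 1250000
```

In this made-up session, compacting every 10 turns reduces billed input from 4.1M to 1.25M tokens, roughly a 3.3x saving.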

Don’t Use Opus for Everything

Opus 4 costs 5x as much as Sonnet ($15/$75 vs $3/$15 per M input/output tokens). For most coding tasks, Sonnet is equivalent, and GLM-5.1 matches Opus on agentic tasks at 1/12 the cost.
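As a quick illustration of the price gap, the sketch below prices one workload at both rates quoted above. The workload size is hypothetical, and cache pricing is ignored.

```python
# (input, output) prices in dollars per million tokens, as quoted above.
PRICES_PER_MTOK = {
    "opus": (15.0, 75.0),
    "sonnet": (3.0, 15.0),
}


def api_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of a workload at list price, without caching."""
    input_price, output_price = PRICES_PER_MTOK[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# HYPOTHETICAL workload: 2M input tokens, 200K output tokens.
opus = api_cost("opus", 2_000_000, 200_000)
sonnet = api_cost("sonnet", 2_000_000, 200_000)

print(f"Opus:   ${opus:.2f}")    # $45.00
print(f"Sonnet: ${sonnet:.2f}")  # $9.00
```

Because both the input and the output price differ by the same factor, the 5x ratio holds for any mix of input and output tokens.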

If you struggle with available tokens, use Opus only for:

Complex architectural reasoning when Sonnet keeps failing

Long-context analysis where every detail matters

Tasks with extreme ambiguity

Default to Sonnet or GLM. Escalate to Opus when the model fails.

Watch for MCP Tool Overhead

MCP tools can easily add ~20K tokens to every session just from tool definitions.

By default, tool definitions are loaded on demand with Opus and Sonnet, but this may not work with custom LLMs. To remove MCP servers you don’t need, type /mcp in the Claude Code CLI.

Hint: This is where you can adjust default settings for Opus and Sonnet:

{

“env”: {

“ENABLE_TOOL_SEARCH”: “true”

}

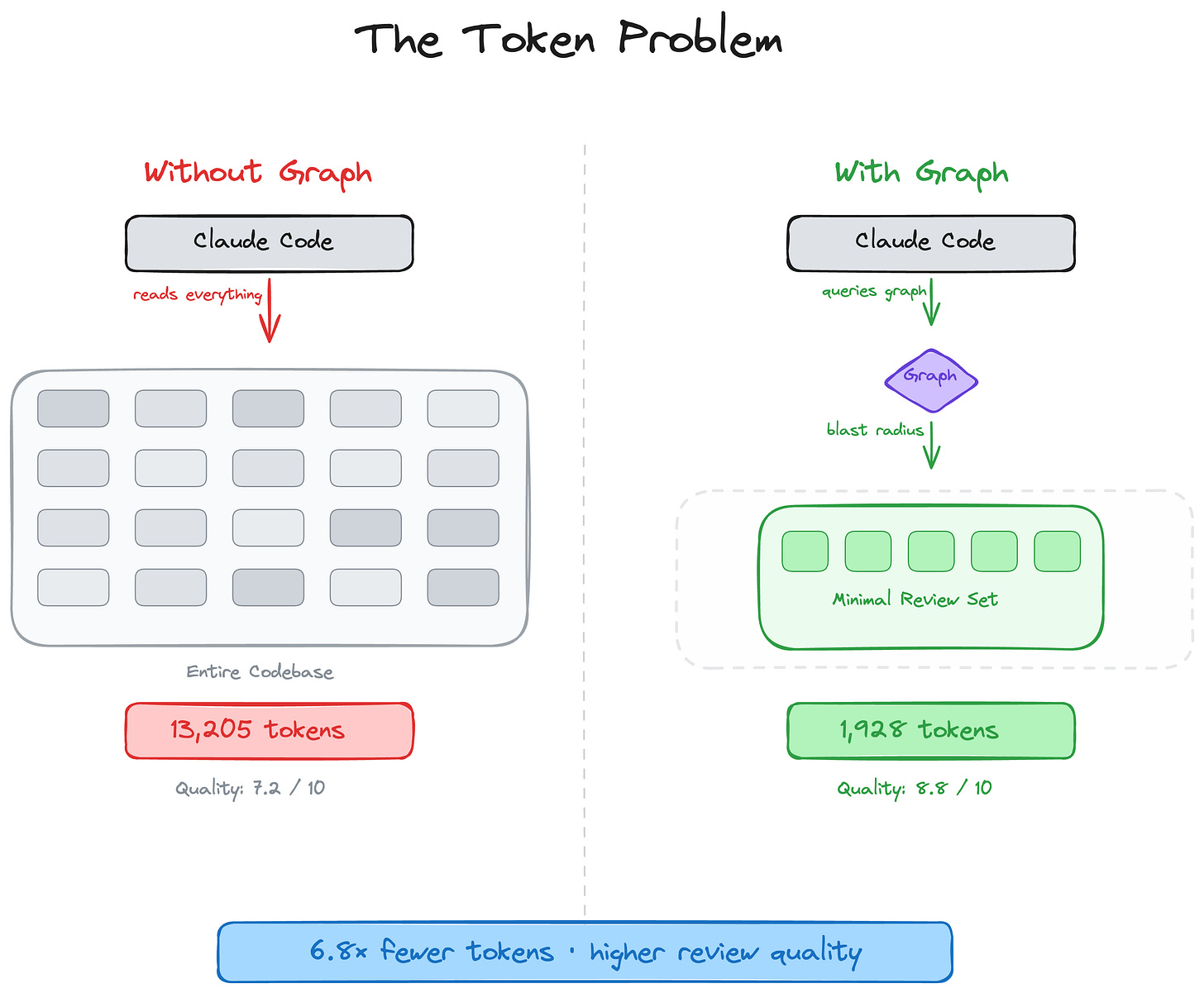

}Code Graph MCP: Feed Claude Only What It Needs

Every time Claude Code reviews a change, it searches your codebase with grep and glob — smart, but not structural. It doesn’t know which functions call what.

code-review-graph adds that structural layer. It parses your codebase into an AST, stores it in SQLite, and tracks changes via git hooks. At review time, it traces the blast radius of your change and feeds Claude only the affected files.
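The "blast radius" idea can be sketched as a reverse traversal of a call graph: start from the changed functions, walk to everything that calls them directly or indirectly, and collect the files involved. The code below is my own illustration of that idea, not code-review-graph's implementation; the graph, function names, and file paths are made up.

```python
from collections import deque


def blast_radius(callers: dict[str, set[str]],
                 file_of: dict[str, str],
                 changed: set[str]) -> set[str]:
    """Files containing the changed functions or any transitive caller.

    callers maps a function to the functions that call it (reverse edges).
    """
    seen = set(changed)
    queue = deque(changed)
    while queue:
        fn = queue.popleft()
        for caller in callers.get(fn, ()):
            if caller not in seen:   # guard against cycles and repeats
                seen.add(caller)
                queue.append(caller)
    return {file_of[fn] for fn in seen if fn in file_of}


# HYPOTHETICAL five-function project.
callers = {
    "parse_price": {"build_invoice"},
    "build_invoice": {"send_invoice"},
    "format_date": {"render_footer"},
}
file_of = {
    "parse_price": "pricing.py",
    "build_invoice": "invoice.py",
    "send_invoice": "mailer.py",
    "format_date": "dates.py",
    "render_footer": "ui.py",
}

print(sorted(blast_radius(callers, file_of, {"parse_price"})))
# ['invoice.py', 'mailer.py', 'pricing.py']
```

A change to `parse_price` pulls in three of the five files; `dates.py` and `ui.py` never need to be read.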

The author’s benchmarks: 6.8x fewer tokens on code reviews, up to 49x on daily coding. On a 27,700-file monorepo, only 15 files were read.

I haven’t tested these numbers myself, since I don’t currently work with codebases that large, but the architecture makes sense, especially for large codebases where the savings compound.

Agent-Browser: Browse Without Screenshots

If your agents need browser access, don’t use Chrome MCP with screenshots — each screenshot eats thousands of tokens for a single page view.

agent-browser by Vercel Labs takes a different approach: instead of screenshots, it returns an accessibility tree snapshot with element references (@e1, @e2, etc.). Agents interact via those refs — compact text, not images. The result: 82% fewer tokens than Playwright MCP, up to 93% reduction overall.

It’s also much more reliable than the standard web fetch.

No configuration needed. Works with Claude Code (tell it to use it in CLAUDE.md) and any MCP-compatible agent. Installation:

npm install -g agent-browser

6. Closing

Subscribe for daily coding — stop watching the meter. Route automation to GLM-5.1 for Opus-level agentic performance at 1/12th the cost. Track every token with an open-source dashboard so you know exactly what you’re getting.

Anthropic’s April policy change forced automation users onto API billing. But it also clarified the landscape: subscriptions for Anthropic tools, APIs for everything else.

The one thing still missing: real token visibility from Anthropic. Until they ship it, the dashboard fills the gap. Clone it, see where your tokens go, and stop guessing: https://github.com/phuryn/claude-usage

Thanks for Reading The Product Compass

It’s amazing to learn and grow together.

Next: prototyping for PMs (3 tools & templates). This will be paid content.

Have a great week ahead,

Paweł

The token visibility gap is the real issue. You can't optimize what you can't measure. Most teams switch to API mode the moment they start caring about cost — not because the subscription is bad value, but because the opacity makes budgeting impossible at scale. The teams that stay on subscription are the ones treating it like a tool, not infrastructure.

I’ve been playing around with your dashboard. Do you think it would be valuable to add cache read and write costs, for more detailed insight?