OpenAI’s Product Leader Shares 5 Phases To Build, Deploy, And Scale Your AI Product Strategy From Scratch

The most practical guide you’ll read on AI product strategy. This will teach you how to build an AI moat that compounds and how to lead AI initiatives with confidence and clarity.

Hey, Paweł here. Welcome to the free archived edition of The Product Compass!

Every week, I share actionable insights and resources for AI PMs.

Here’s what you might have recently missed:

I Copied the Multi-Agent Research System by Anthropic. No Coding!

AI Agent Architectures: The Ultimate Guide With n8n Examples


Recently, with Miqdad Jaffer, OpenAI’s Product Leader, we dove into everything you need to know about AI product strategy.

Today, we’re diving deeper into how to build, deploy, and scale your own successful AI product strategy and lead AI initiatives step by step.

But before we do that, let’s discuss why mastering AI product strategy should be the first thing on your mind.

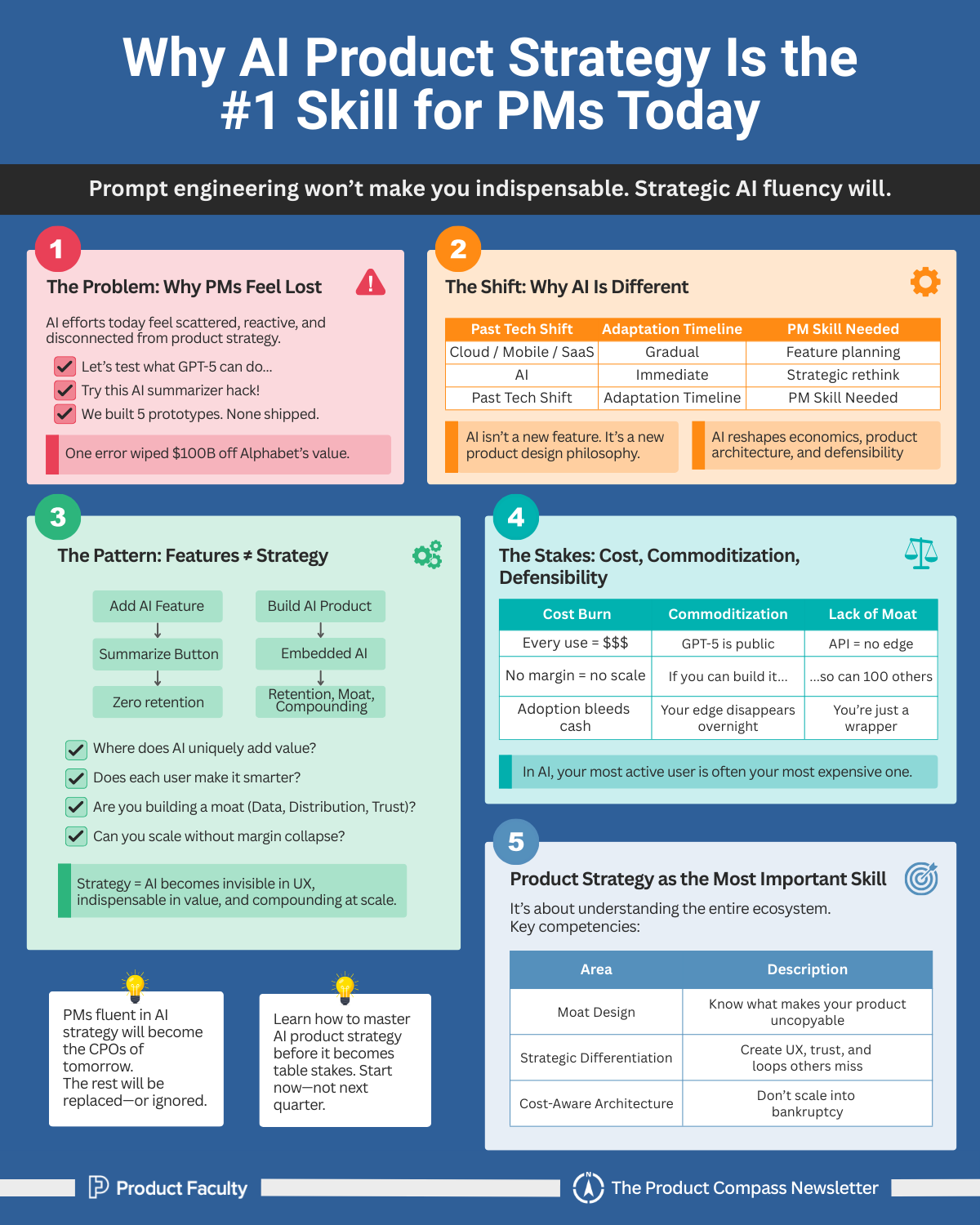

MIT just revealed that most organizations are getting ZERO return from generative AI despite pouring billions into it.

One hallucination in a Google Bard demo wiped roughly $100B off Alphabet’s market cap.

And right now, you’re probably either thinking about leading AI initiatives or already running some…

But if you’re honest with yourself, deep down, you know it feels scattered and all over the place.

(It’s all okay, we’ve all been there 🙂)

Because let’s face it: the Slack channels are full of prompt experiments, prototypes are half-built, and every week there’s another “AI hack” that doesn’t connect to any real strategy.

Part of the reason is that, over the past two decades, product management has absorbed new waves of technology: mobile, cloud, SaaS. But each of those was ultimately a platform shift you could adapt to slowly. AI is different. It isn’t just a new platform; it brings new economics, a new product design philosophy, and a new kind of defensibility.

The PMs who understand how to build and scale AI products strategically will become the CPOs of tomorrow and eventually lead their companies toward sustainable success. The ones who don’t will struggle to stay relevant in organizations that expect AI fluency as table stakes.

And remember, AI product strategy isn’t about “knowing what ChatGPT can do,” or spinning up prototypes in an afternoon. Anyone can do that.

It’s about knowing where AI fits in your product, how it changes your unit economics, how to build feedback loops that compound value, and how to defend against commoditization. It’s the difference between being a PM who “adds AI” to a backlog and being a PM who sets the company’s direction in an AI-first market.

But here’s the common pattern I’ve seen most people get completely wrong.

Side Note: If you want to shorten your learning curve and master and implement the most defensible AI product strategy in a 6-week cohort with OpenAI’s Product Leader, now is the time to do it.

Right now, you get $550 off plus a written review of your AI product strategy (worth $10,000; free for the first 100 students only). Over 50 have already enrolled, including leaders from UberAI and Rippling.


Adding AI Features vs. Building an AI-Powered Product

Too many teams confuse features with strategy. Slapping a “summarize” button or “AI assistant” into your product is not a strategy; it’s a novelty.

Users will try it, maybe even like it, but without defensibility or workflow integration it won’t retain users, it won’t scale, and it won’t differentiate you from the hundreds of other tools doing the same thing.

Building an AI-powered product, by contrast, means designing from first principles:

Where does AI uniquely add value?

How do we architect the product so every new user makes it smarter, not just more expensive?

What moat are we building (data, distribution, or trust) that competitors can’t replicate?

How do we scale adoption without bleeding margins on inference costs?

In a nutshell, it’s ALL about rethinking the product so deeply that AI becomes its engine… invisible in the workflow, indispensable to the user, and compounding in value as you grow.

But why do you need to act right now… not a week from now, not a quarter from now… but right now?

The Stakes: Costs, Commoditization, and Defensibility

The stakes could not be higher. AI products operate under a completely different set of rules:

Costs don’t disappear with scale. Every user interaction burns compute, meaning your most engaged users are often your most expensive.

Commoditization happens overnight. Your competitors get access to the same models, GPT-5 included, the day you do. If your only edge is calling an API, you have no edge.

Defensibility is everything. Without a moat (proprietary data, trusted governance, or instant distribution), you’re just another wrapper waiting to be replaced.
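To put rough numbers on the first point: under a flat subscription price, every extra interaction eats into margin. Here’s a minimal Python sketch of per-seat unit economics. The seat price, per-token cost, and tokens per interaction are hypothetical figures chosen only to show the shape of the curve, not anyone’s real rates, and the function names are illustrative.

```python
# A minimal sketch of per-seat unit economics for an AI feature.
# All figures are hypothetical, chosen only to show the shape of the curve.
PRICE_PER_SEAT = 20.00          # flat monthly subscription, USD (assumed)
COST_PER_1K_TOKENS = 0.01       # blended inference cost, USD (assumed)
TOKENS_PER_INTERACTION = 2_000  # prompt + completion, on average (assumed)


def inference_cost(interactions: int) -> float:
    """Monthly inference spend for one user at a given usage level."""
    tokens = interactions * TOKENS_PER_INTERACTION
    return tokens / 1_000 * COST_PER_1K_TOKENS


def monthly_margin(interactions: int) -> float:
    """Gross margin per seat after inference costs (can go negative)."""
    return PRICE_PER_SEAT - inference_cost(interactions)


def break_even_interactions() -> float:
    """Usage level at which inference spend equals the seat price."""
    cost_per_interaction = TOKENS_PER_INTERACTION / 1_000 * COST_PER_1K_TOKENS
    return PRICE_PER_SEAT / cost_per_interaction


if __name__ == "__main__":
    # Light, regular, and power users under the same flat price.
    for usage in (50, 200, 1_000):
        print(f"{usage:>5} interactions/month -> margin ${monthly_margin(usage):.2f}")
    print(f"break-even at ~{break_even_interactions():.0f} interactions/month")
```

With these assumed numbers, a user at 1,000 interactions a month consumes the entire seat price in inference, which is exactly why your most engaged users can end up being your least profitable ones.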

This is why AI product strategy is the most important skill for PMs right now. Again, it’s not just about writing clever prompts. It’s about understanding the full ecosystem: from moat to differentiation, from design to deployment, from experimentation to organizational leadership.

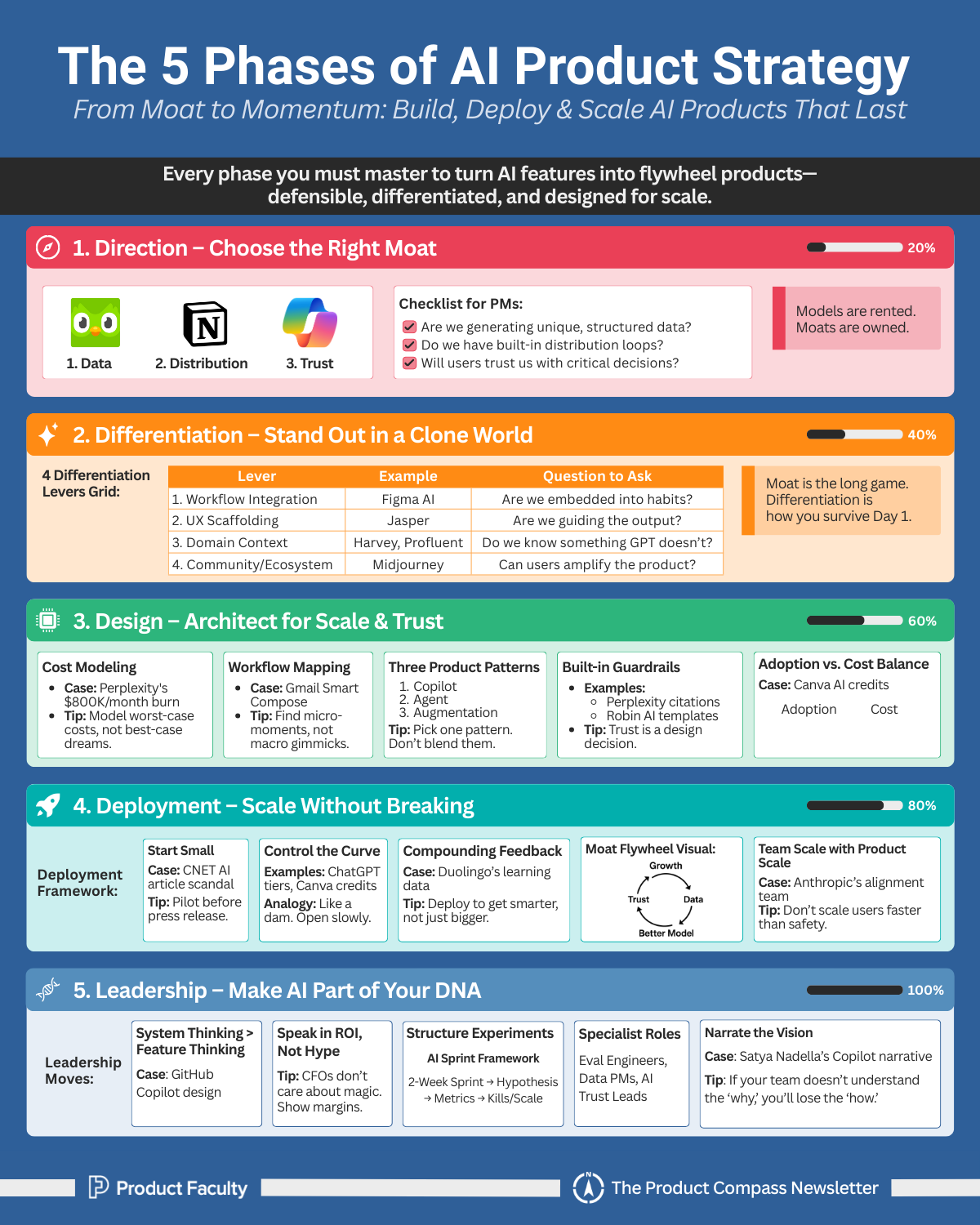

5 Phases of Building, Deploying, and Scaling Your AI Product Strategy

Here’s what we’re going to cover today:

Phase 1: Direction - Choosing the Right Moat

Phase 2: Differentiation - Standing Out in a World of Commoditized Models

Phase 3: Design - Building the Product Architecture

Phase 4: Deployment - Scaling Without Breaking Costs

Phase 5: Leadership - Embedding AI Into the Org

Bonus: How to Run AI Experiments That Don’t Waste Time

Let’s dive into everything.
