The Ultimate Guide to Claude Opus 4.7

What changed, the 10 migration moves, and the 10 highest-ROI levers to keep costs down.

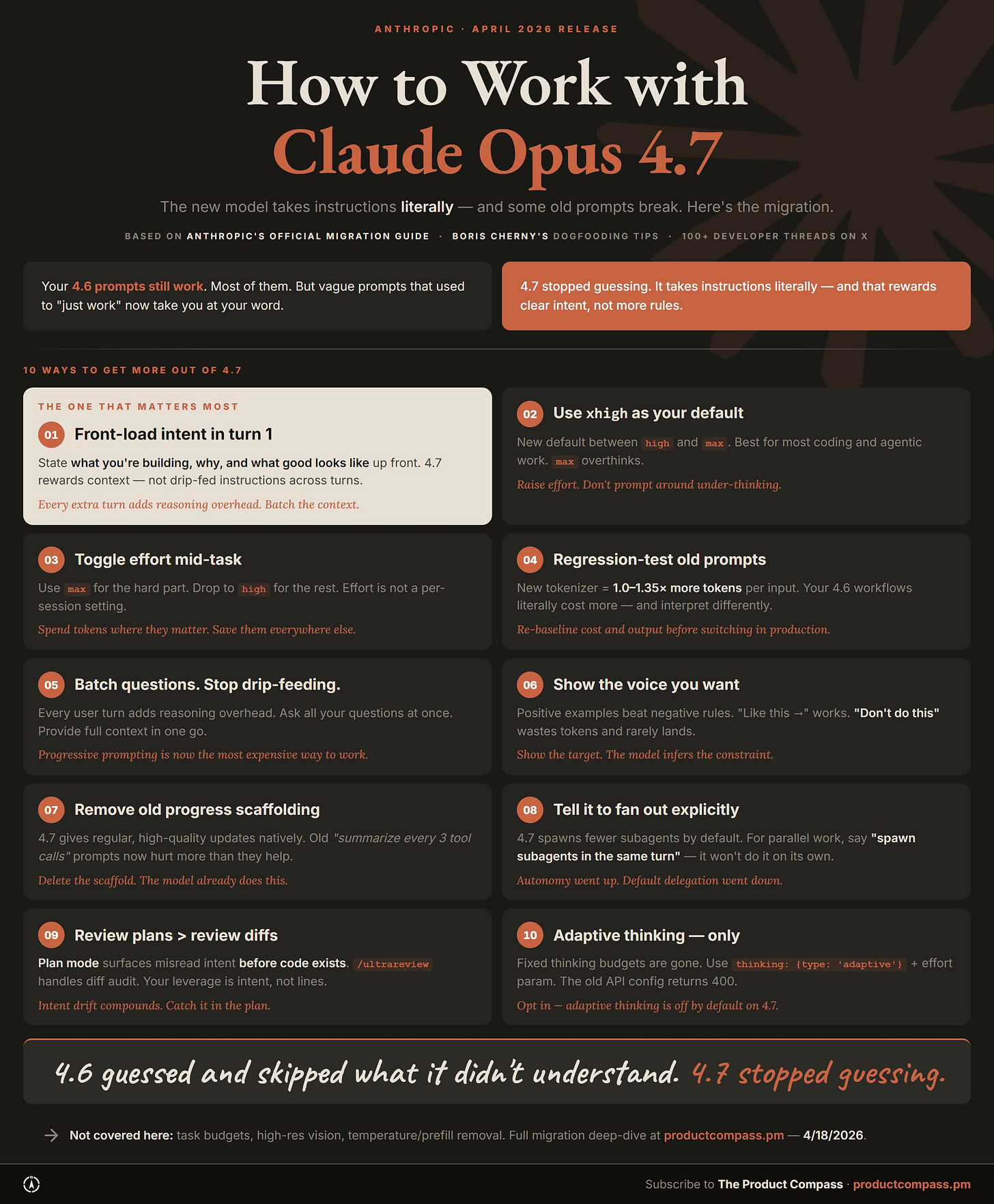

4.6 guessed. 4.7 stopped guessing. Your old prompts still work, mostly. The ones that break need one of the ten moves below.

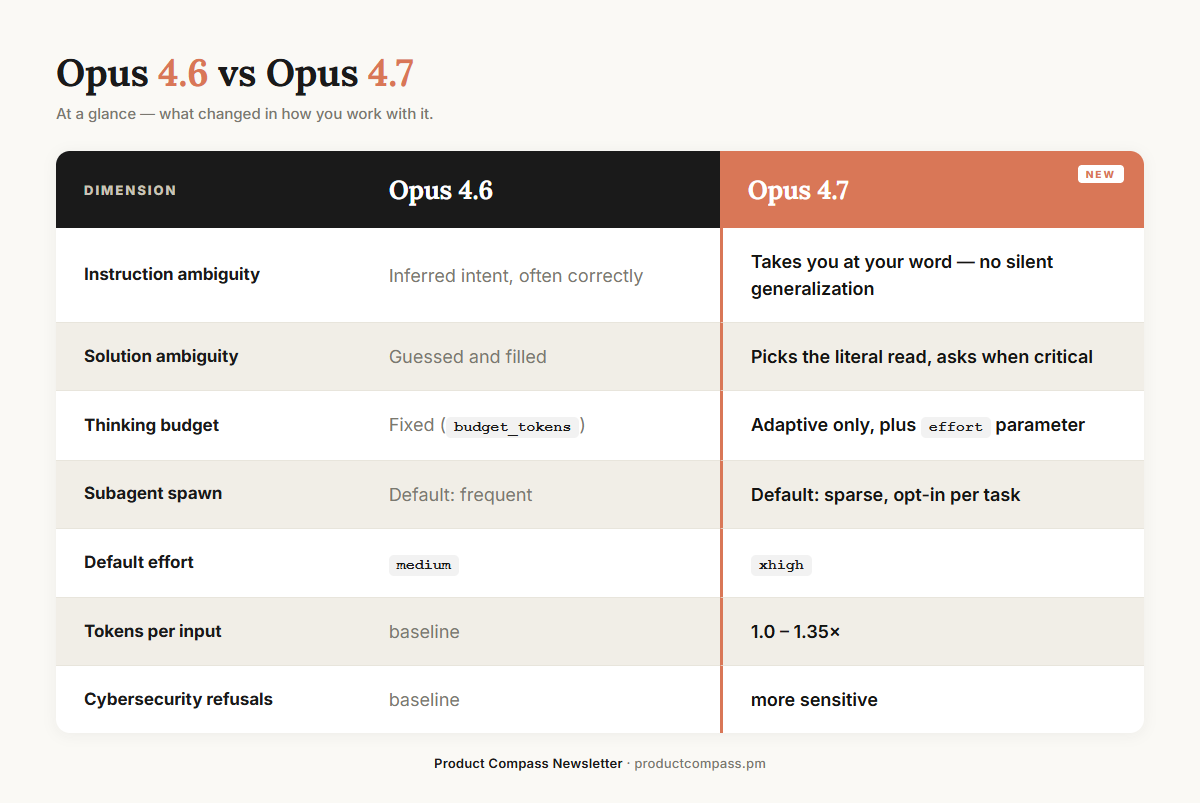

Anthropic shipped Claude Opus 4.7 on April 16. The official migration guide puts it plainly: 4.7 “takes the instructions literally” and “will not silently generalize.”

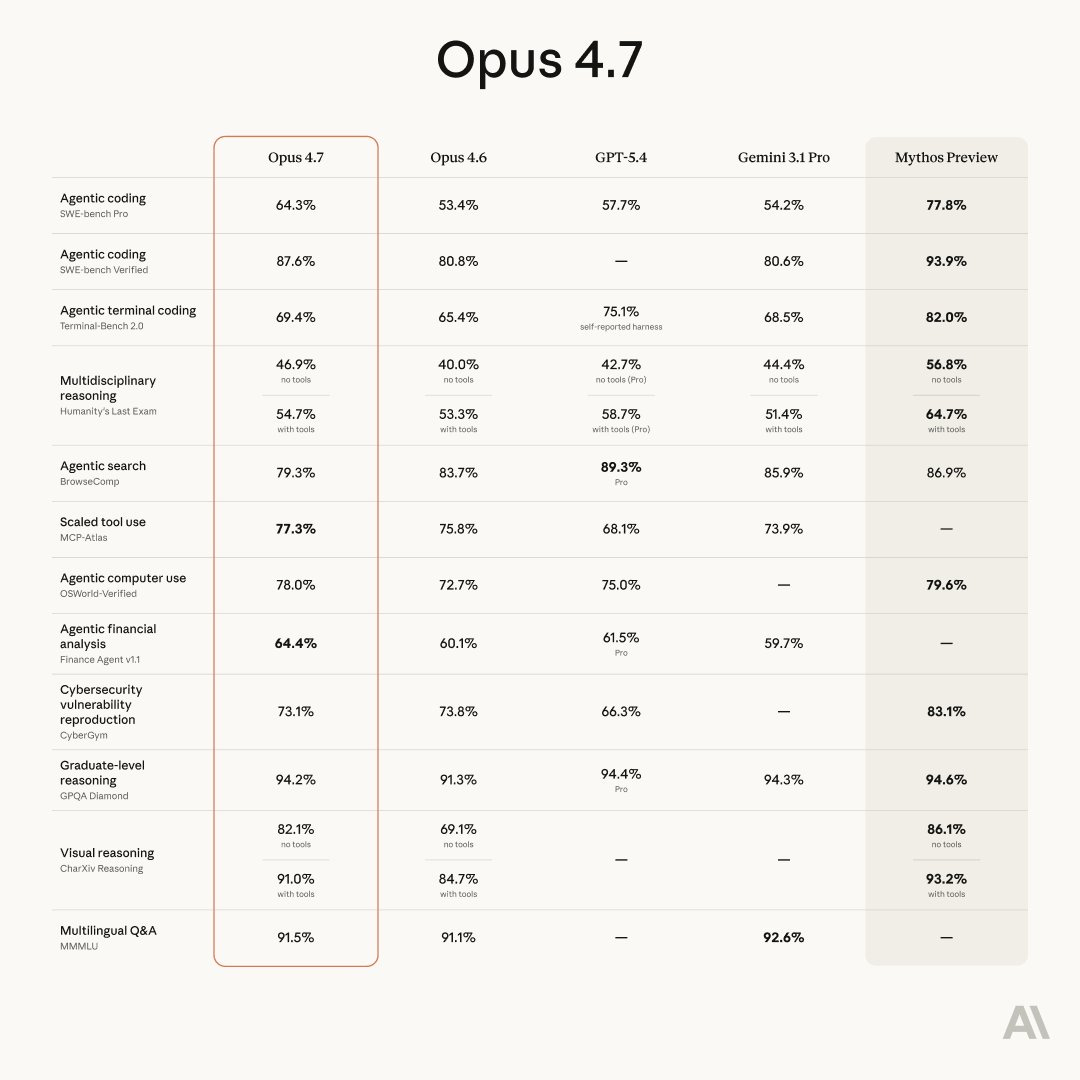

It's not a uniform upgrade. Wins on coding, creative writing, and structured work. Losses on instruction following with vague prompts, multi-turn conversations, and long-context retrieval. The benchmarks show a trade, not a regression. In § 6, I separate real regressions from preference artifacts and cost mechanics.

Boris Cherny, Claude Code lead at Anthropic, posted on release day: "It took a few days for me to learn how to work with it effectively."

Why Read This, and Why Now

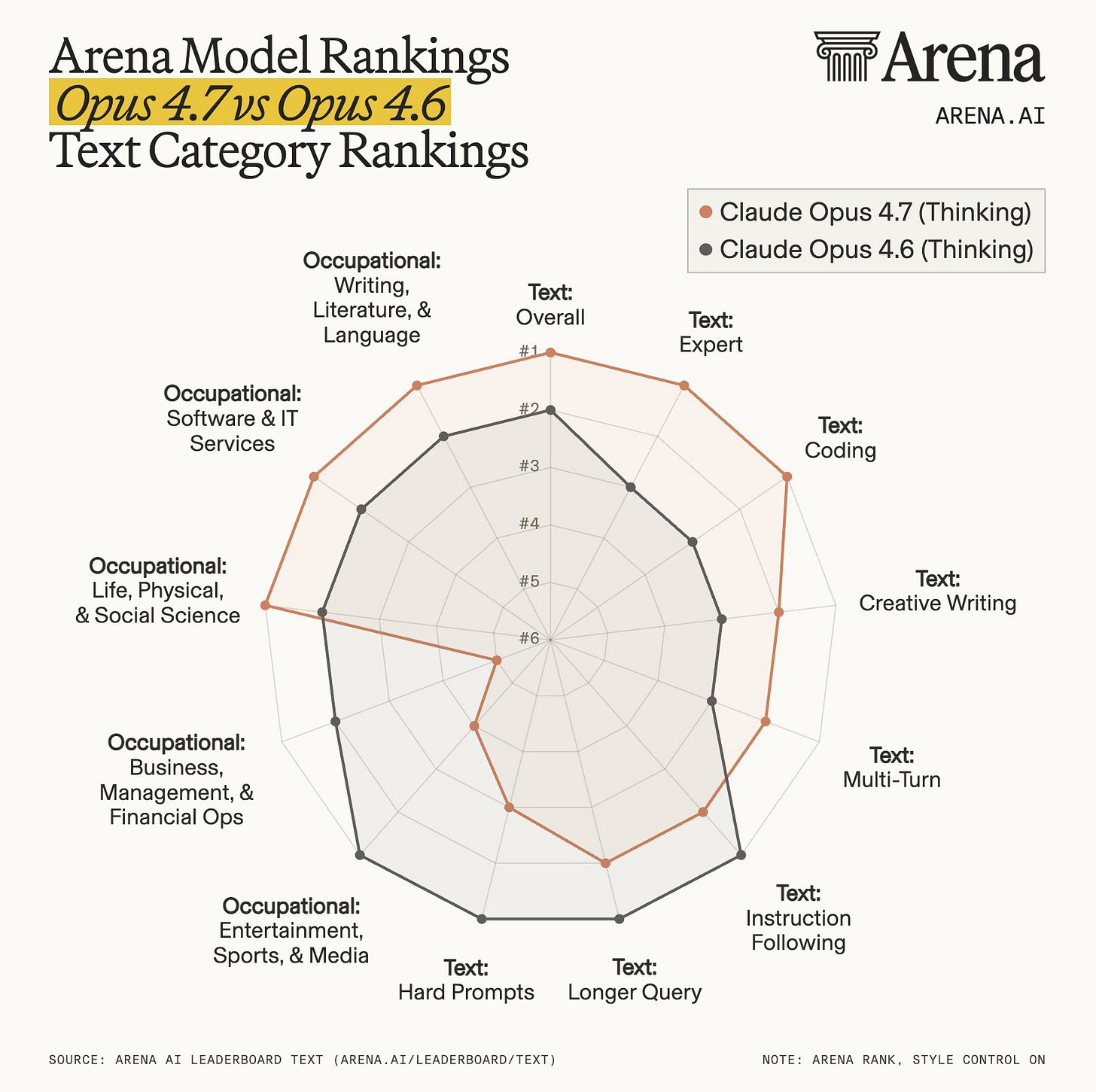

Reddit calls 4.7 a regression. Arena shows 4.6 winning on instruction following. Anthropic's migration guide says it's working as designed. Boris confirms it's more agentic and precise.

The takes don’t agree because they’re measuring different workflows.

By the end you’ll know:

The ten moves to make your 4.6 prompts work on 4.7

Where 4.7’s cost really goes, and the ten highest-ROI levers

What Cowork and Dispatch users lose vs Claude Code, and what I use on mobile instead

How to decide between 4.6 and 4.7 for your own workflow

You don’t need more instructions. You need better intent.

I’ve had 16+ hours with 4.7 so far. What follows is what changed, what to do about it, and a resources section at the end.

1. What’s New in Opus 4.7

4.6 filled the gaps when your instruction was unclear. 4.7 takes you at your word.

For PMs: if your 4.6 workflow relied on the model “figuring out what you meant,” expect 4.7 to ask more questions, or to do less, or to do exactly what you asked for (which is not what you wanted).

2. Intent: The Universal Unlock

This is the principle: 4.7 rewards clear intent. Everything else in this post is a tactic.

Not longer prompts, not more rules, not a bigger CLAUDE.md. Intent splits into two layers:

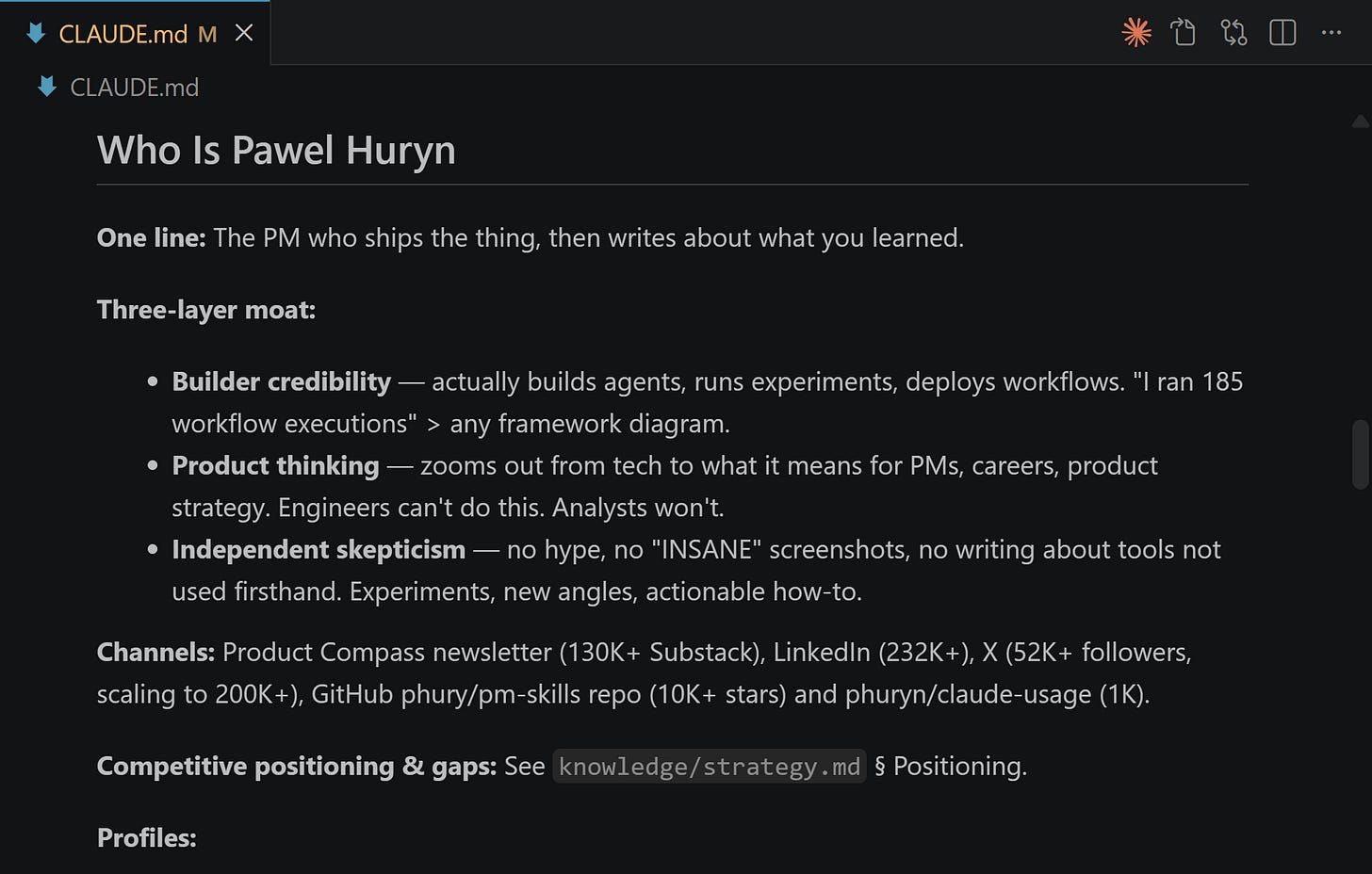

Strategic context is durable: what you’re building, who it’s for, what’s off-limits, what good looks like. Write it once. Put it in CLAUDE.md. It loads every session, progressive-disclosure style, so you’re not paying to reintroduce the project on turn one.

Per-task intent is variable: what specifically do I want Claude to do right now. You still write this every turn. The gain from CLAUDE.md is that you stop retyping the strategic context on top of it.

The full version (seven components, how they compose) is in The Intent Engineering Framework for AI Agents, published three months before 4.7 shipped:

This is perfectly aligned with Karpathy’s Claude coding post, too:

“Leverage. LLMs are exceptionally good at looping until they meet specific goals and this is where most of the “feel the AGI” magic is to be found. Don’t tell it what to do, give it success criteria and watch it go (...) Change your approach from imperative to declarative to get the agents looping longer and gain leverage.”
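A minimal sketch of both layers for direct Messages API calls. Claude Code loads CLAUDE.md for you, so this only mirrors the same split outside it. It assumes your strategic context is a CLAUDE.md-style string sent as the system prompt, and it writes per-task intent declaratively, as a goal plus success criteria. The helper name build_request is hypothetical. The cache_control marker is the API's prompt-caching flag, used so repeated calls can read the durable layer from cache instead of paying full input price each time (caching only applies above a minimum prompt length).

```python
def build_request(strategic_context: str, goal: str, success_criteria: list[str],
                  model: str = "claude-opus-4-7") -> dict:
    """Return kwargs for client.messages.create(**kwargs) (hypothetical helper).

    Layer 1 (durable): strategic context, sent once as a cacheable system block.
    Layer 2 (per task): a goal plus explicit success criteria, not step-by-step orders.
    """
    criteria = "\n".join(f"- {c}" for c in success_criteria)
    task = f"Goal: {goal}\n\nDone when:\n{criteria}"
    return {
        "model": model,
        "max_tokens": 16000,
        "system": [
            {
                "type": "text",
                "text": strategic_context,
                # Prompt-caching marker: later calls can reuse this prefix from cache.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        "thinking": {"type": "adaptive"},
        "messages": [{"role": "user", "content": task}],
    }


if __name__ == "__main__":
    context = "We build a budgeting app for freelancers. Off-limits: the billing/ module."
    request = build_request(
        context,
        goal="Add CSV export to the transactions page",
        success_criteria=["Existing tests pass", "The export matches the on-screen filters"],
    )
    print(request["messages"][0]["content"])
```

Only the user message changes from task to task; the system block stays identical, which is what makes it cacheable.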

Anthropic and OpenAI convergence

Anthropic moved Opus 4.7 toward more literal instruction following. OpenAI updated their December 2025 Model Spec to say “consider not just the literal wording but the underlying intent.”

They’re converging from opposite directions. Anthropic is adding precision to its intent-first model. OpenAI is adding intent inference to its precision-first model. The same skill (engineering intent clearly) is becoming the unlock on both sides.

And Anthropic is already encoding it

Managed Agents (research preview) bakes success criteria and outcomes into the framework itself. I recently covered it here:

The skill of engineering clear intent is what transfers across vendors and models. That’s why it has the longest shelf life right now.

Side Note: On May 9, we’re launching Hands-On Claude Code Certification. In 4 weeks, you’ll learn everything you need to ship full agentic products with Claude Code: UI, agentic harness, evals, guardrails, and ops. Real apps, not demos.

Most students expense this through their companies.

3. The 10 Claude Opus 4.7 Migration Moves

3.1 Front-load intent in CLAUDE.md

You don’t have to retype the strategic context every session. Put it in CLAUDE.md once. Every future session starts with the context already loaded. You still write per-task intent each turn, but you stop paying the “remember what we’re building” tax.

Move information the agent doesn’t need in every session into other files, like strategy.md below:

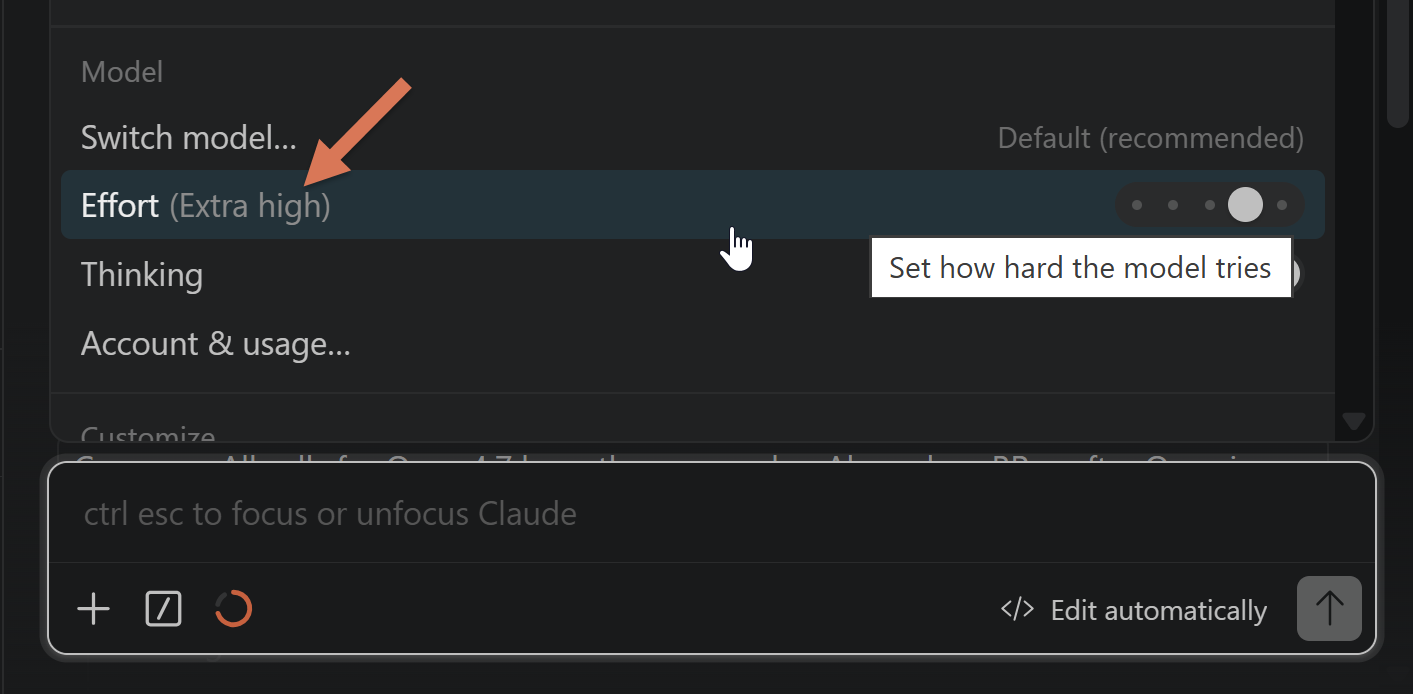

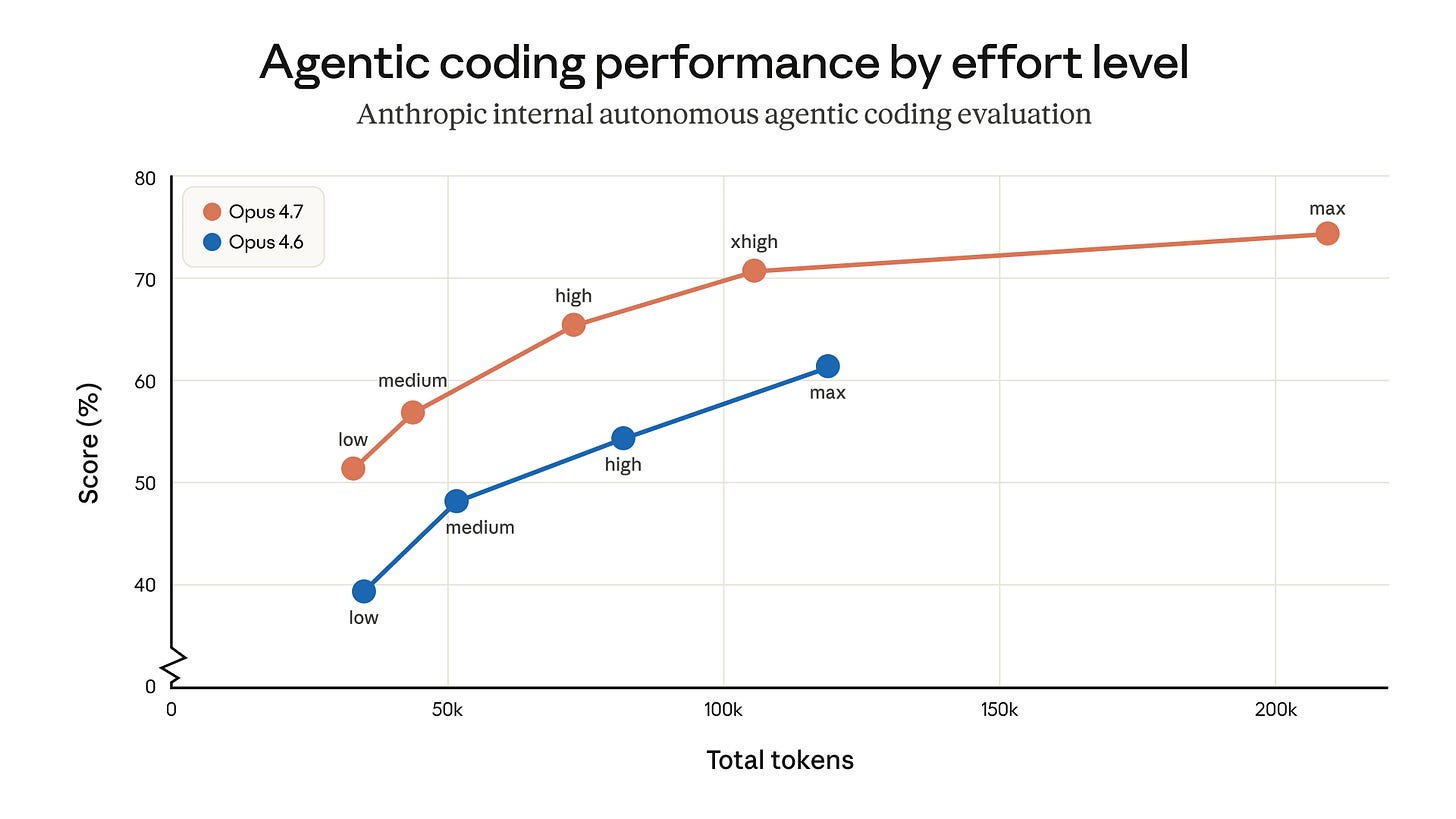

3.2 Default to Extra high (xhigh)

New effort level between high and max. Anthropic’s own recommendation for coding and agentic work.

max is prone to overthinking. Most “4.7 feels slow” reports trace back to people running max by reflex. Use max only when the problem actually needs deep reasoning.

3.3 Toggle effort mid-task

Effort is per-call, not per-session. max for the hard subproblem. Drop back to high for the rest.
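Because effort is set per call, each subproblem can get its own level. A minimal sketch of that routing: xhigh by default, max only for a subproblem flagged as hard, high for routine follow-ups. The helper names and flags are hypothetical, and the request field is an assumption: it sends the level as output_config={"effort": ...}, so confirm the exact field name in Anthropic's effort documentation before relying on it.

```python
from typing import Literal

Effort = Literal["high", "xhigh", "max"]


def effort_for(needs_deep_reasoning: bool = False, routine: bool = False) -> Effort:
    """Pick an effort level for one call (an illustrative policy, not Anthropic's)."""
    if needs_deep_reasoning:
        return "max"    # reserve max for the subproblem that actually needs it
    if routine:
        return "high"   # drop back down for the rest
    return "xhigh"      # default for coding and agentic work


def call_kwargs(prompt: str, effort: Effort) -> dict:
    """Build per-call kwargs; effort is chosen per request, not per session."""
    return {
        "model": "claude-opus-4-7",
        "max_tokens": 16000,
        "thinking": {"type": "adaptive"},
        # ASSUMPTION: effort travels in output_config; verify the field name in the docs.
        "output_config": {"effort": effort},
        "messages": [{"role": "user", "content": prompt}],
    }


plan = [
    ("Find why the cache invalidation test is flaky", effort_for(needs_deep_reasoning=True)),
    ("Implement the fix across the two affected modules", effort_for()),
    ("Update the changelog", effort_for(routine=True)),
]

for prompt, effort in plan:
    request = call_kwargs(prompt, effort)  # pass to client.messages.create(**request)
    print(f"{effort:>5}  {prompt}")
```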

3.4 Regression-test old prompts

New tokenizer: the same input now takes 1.0 to 1.35× as many tokens. Your 4.6 workflows can cost more on 4.7 before you’ve changed a line.

The offset: Anthropic raised rate limits alongside the 4.7 launch.

My perspective: what matters most is cost per correct output token. Test it for your specific product before switching the model.
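A back-of-the-envelope sketch of both points: what the tokenizer range does to an unchanged input, and how to compare models on cost per correct output token rather than on total spend. "Correct output tokens" here means output tokens from responses that passed your own checks. The prices and suite runs are placeholders made up to show the arithmetic, not Anthropic's pricing or measured results; plug in current pricing and your own eval numbers.

```python
from dataclasses import dataclass

# From the migration notes: the same input takes 1.0x to 1.35x as many tokens on 4.7.
TOKENIZER_RANGE = (1.0, 1.35)


def projected_input_tokens(tokens_on_46: int) -> tuple[int, int]:
    """Low/high estimate of an unchanged input's token count on 4.7."""
    low, high = TOKENIZER_RANGE
    return round(tokens_on_46 * low), round(tokens_on_46 * high)


@dataclass
class SuiteRun:
    input_tokens: int           # all input tokens billed across the test suite
    output_tokens: int          # all output tokens billed, thinking included
    correct_output_tokens: int  # output tokens from responses that passed your checks


def cost_usd(run: SuiteRun, in_per_mtok: float, out_per_mtok: float) -> float:
    """Total spend for the run, given per-million-token prices."""
    return (run.input_tokens * in_per_mtok + run.output_tokens * out_per_mtok) / 1_000_000


def cost_per_correct_output_token(run: SuiteRun, in_per_mtok: float, out_per_mtok: float) -> float:
    """Spend divided by the output tokens you could actually use."""
    if run.correct_output_tokens == 0:
        return float("inf")  # nothing usable came back
    return cost_usd(run, in_per_mtok, out_per_mtok) / run.correct_output_tokens


# PLACEHOLDER prices and made-up runs: they only demonstrate the arithmetic.
IN_PRICE, OUT_PRICE = 5.0, 25.0
old = SuiteRun(input_tokens=2_000_000, output_tokens=400_000, correct_output_tokens=240_000)
new = SuiteRun(input_tokens=2_500_000, output_tokens=380_000, correct_output_tokens=330_000)

for name, run in (("4.6", old), ("4.7", new)):
    total = cost_usd(run, IN_PRICE, OUT_PRICE)
    per_m = cost_per_correct_output_token(run, IN_PRICE, OUT_PRICE) * 1_000_000
    print(f"{name}: ${total:.2f} total, ${per_m:.2f} per 1M correct output tokens")
```

With these invented numbers, the 4.7 run costs more in total ($22.00 vs $20.00) but less per correct output token ($66.67 vs $83.33 per million), which is exactly the case a raw bill comparison would miss.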

3.5 Batch questions. Stop drip-feeding.

If you have three questions, ask all three in one message.

On 4.6, clarifying across 3-4 turns worked. On 4.7, each turn adds reasoning overhead on top of literal interpretations from earlier turns.

Treat clarification as an exception, not a workflow.
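In Messages API terms, batching means a single user turn carrying every question instead of a multi-turn exchange. A minimal sketch; batch_questions is a hypothetical helper, and numbering the questions is just one way to make each one easy to answer and check.

```python
def batch_questions(context: str, questions: list[str]) -> list[dict]:
    """Pack all open questions into one user message: one turn, not three."""
    numbered = "\n".join(f"{i}. {q}" for i, q in enumerate(questions, start=1))
    content = f"{context}\n\nAnswer all of these in one reply:\n{numbered}"
    return [{"role": "user", "content": content}]


messages = batch_questions(
    "We're migrating the export job from cron to a queue.",
    [
        "Which queue fits our current stack?",
        "How should retries work?",
        "What breaks if two exports overlap?",
    ],
)
print(messages[0]["content"])
```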

3.6 Show what you want

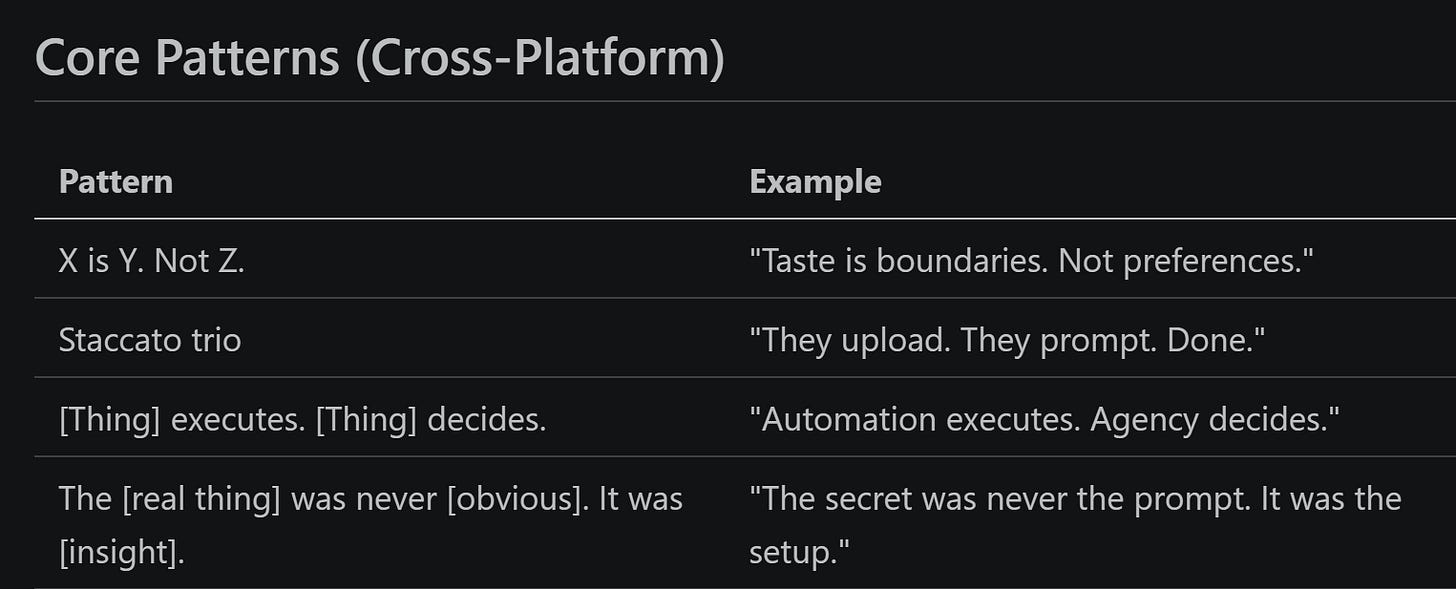

Positive examples beat negative rules. According to Anthropic:

“Like this: ” followed by short examples works.

“Don’t do this: ” rarely lands and burns tokens trying.

If your prompt has more than three “don’t” or “never” lines, flip them. What does the ideal output look like? Show two examples and cut the rules.
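The three-line rule is easy to check mechanically. A rough sketch that flags prompt lines containing "don't" (straight or curly apostrophe) or "never"; it is a plain case-insensitive word match, so it will miss rephrased prohibitions and can flag quoted text. The function and constant names are hypothetical.

```python
import re

NEGATIVE_RULE = re.compile(r"\b(don['’]t|never)\b", re.IGNORECASE)
MAX_NEGATIVE_LINES = 3  # the rule of thumb from the text


def negative_rule_lines(prompt: str) -> list[str]:
    """Return the lines of a prompt that contain a "don't" or "never" rule."""
    return [line for line in prompt.splitlines() if NEGATIVE_RULE.search(line)]


def should_flip_to_examples(prompt: str) -> bool:
    """True when the prompt leans on more than three negative rules."""
    return len(negative_rule_lines(prompt)) > MAX_NEGATIVE_LINES


prompt = """Write the release note.
Don't use jargon.
Never exceed 100 words.
Don't mention internal ticket numbers.
Never use exclamation marks."""

if should_flip_to_examples(prompt):
    print("Replace these with 'Like this:' examples:")
    for line in negative_rule_lines(prompt):
        print("  ", line)
```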

3.7 Delete old progress scaffolding

“Summarize every 3 tool calls.” “Give me a status update before moving on.” “Explain your plan, then execute.” Delete these.

4.7 emits high-quality progress updates natively in long agentic traces:

3.8 Tell it to fan out explicitly

4.7 spawns fewer subagents by default and makes fewer tool calls per task. For parallel exploration, you now have to ask.

Phrasings that work: “spawn subagents in the same turn to investigate X, Y, Z.” Autonomy went up. Default delegation went down.

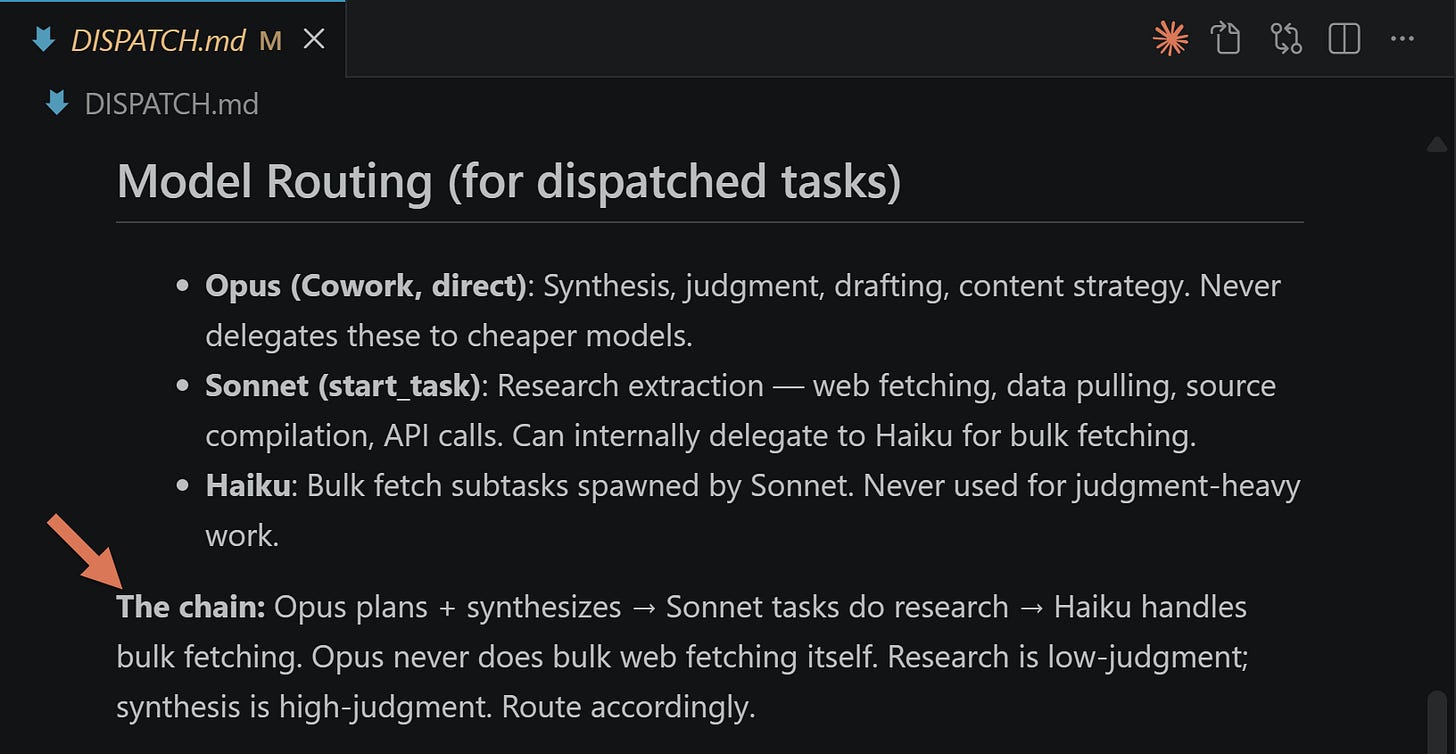

I also added separate instructions for Dispatch in DISPATCH.md. This file is referenced from CLAUDE.md:

Later, I explain why I started using Dispatch less often.

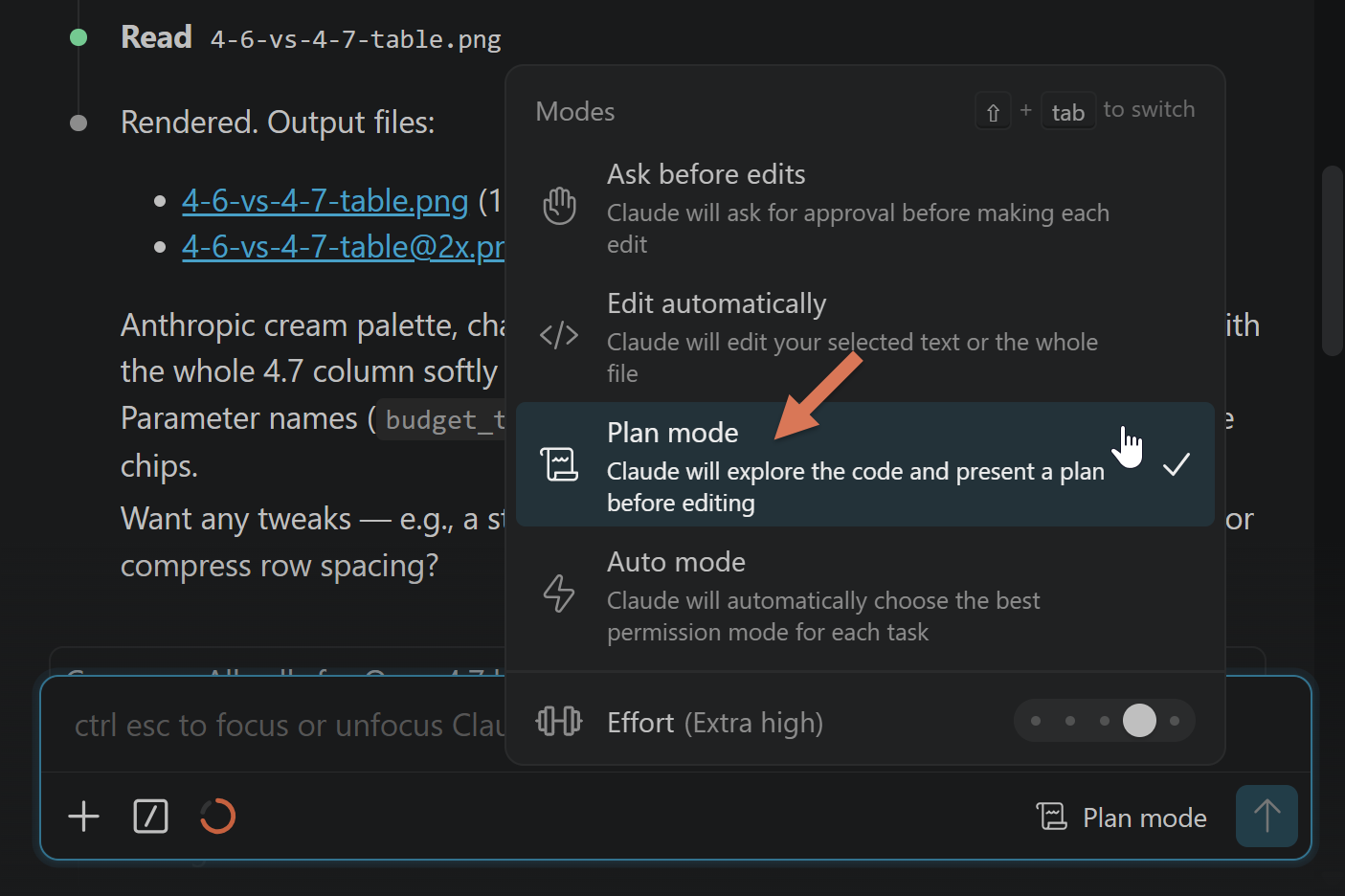

3.9 Review plans, not diffs

Two different primitives. Don’t confuse them.

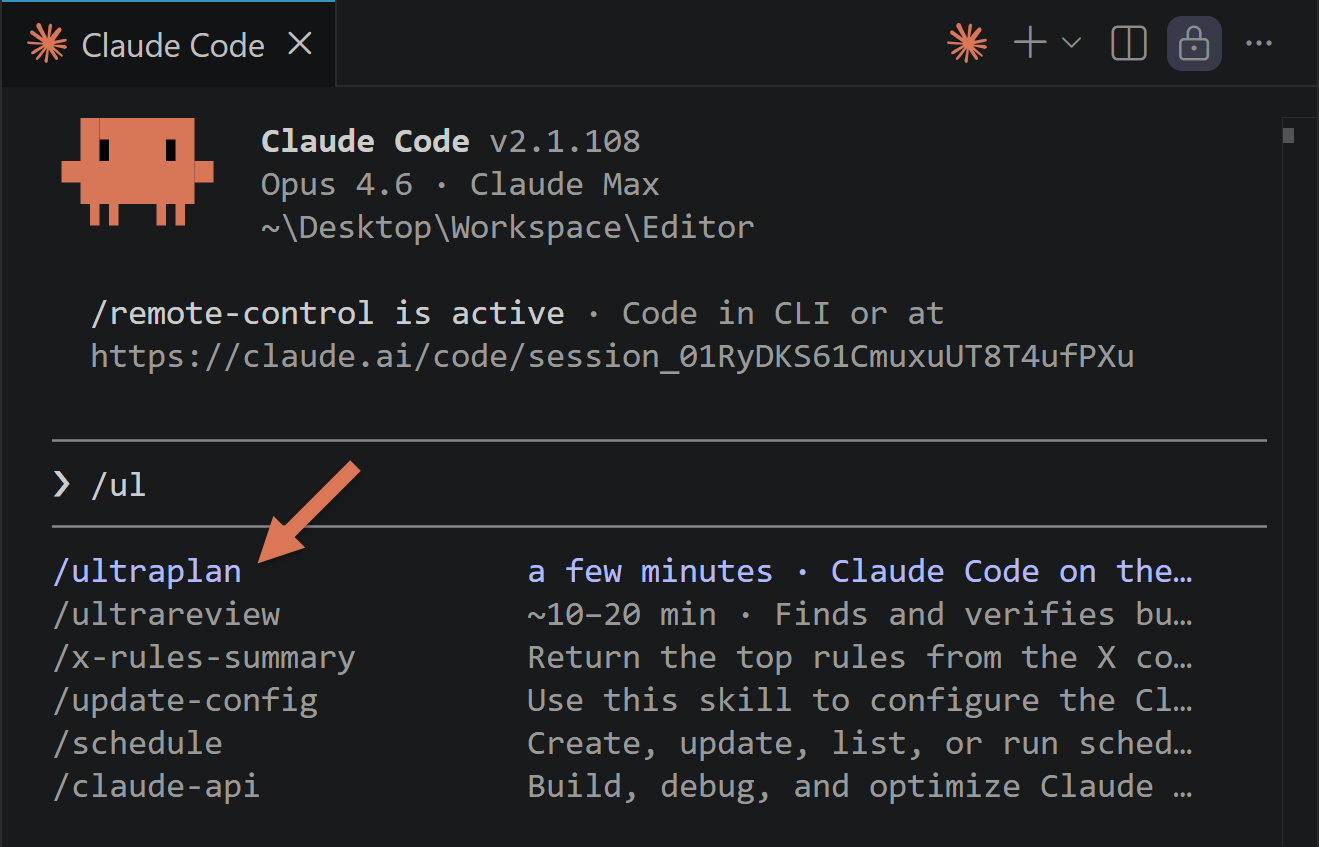

Plan mode (Shift+Tab twice in the Claude Code CLI): inline, surfaces the plan before any code exists in the current session. Use for any change that touches more than one file:

/ultraplan (CLI only; it doesn’t work in the VS Code extension): cloud-based plan drafting from the CLI, reviewed in the browser. The plan runs in a remote session while your terminal stays free:

Why this matters more on 4.7: because 4.7 takes intent literally, a small misread in the plan becomes a large misread in the diff. Reviewing a 10-line plan for intent drift takes 30 seconds. Reviewing a 200-line diff for the same drift takes 15 minutes.

My take:

Intent drift compounds. Catch it in the plan.

3.10 Adaptive thinking only

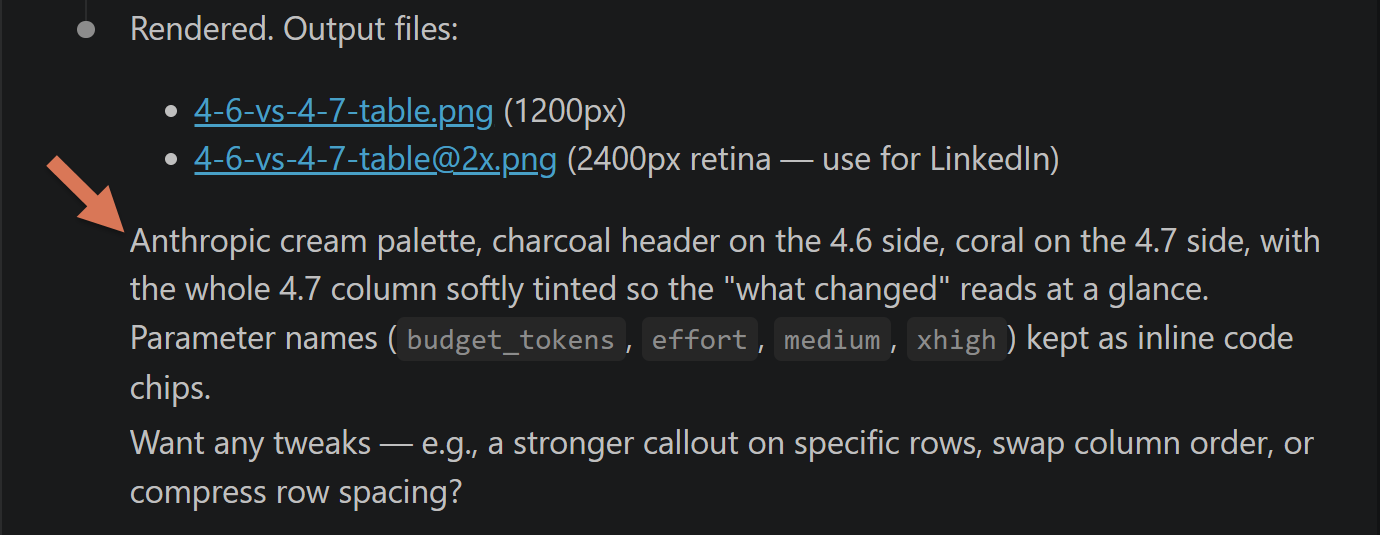

Fixed thinking budgets are gone. Use thinking: {type: 'adaptive'} plus the effort parameter. Old API calls with budget_tokens return HTTP 400. Find and replace before flipping the model flag:

```python
import anthropic

client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

response = client.messages.create(
    model="claude-opus-4-7",
    max_tokens=16000,
    # Adaptive thinking replaces {"type": "enabled", "budget_tokens": N}
    thinking={"type": "adaptive"},
    messages=[
        {
            "role": "user",
            "content": "Explain why the sum of two even numbers is always even.",
        }
    ],
)
```

If you’re not an engineer, you don’t need to memorize the syntax. Just give your agent this documentation: https://platform.claude.com/docs/en/build-with-claude/adaptive-thinking.md (remove “.md” for a human-friendly view).
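If your requests are assembled in one place, the find-and-replace can also be done in code. A minimal sketch: migrate_to_adaptive is a hypothetical helper that swaps the model ID and replaces any thinking block carrying budget_tokens (the older fixed-budget shape) with {"type": "adaptive"}. The old model string is a placeholder for whatever ID you used before, and the sketch does not set an effort level.

```python
import copy


def migrate_to_adaptive(request: dict, model: str = "claude-opus-4-7") -> dict:
    """Return a copy of a Messages API request rewritten for adaptive thinking.

    Any thinking block with budget_tokens is replaced, because fixed budgets
    are rejected with HTTP 400 on 4.7.
    """
    migrated = copy.deepcopy(request)  # leave the caller's dict untouched
    migrated["model"] = model
    thinking = migrated.get("thinking")
    if isinstance(thinking, dict) and "budget_tokens" in thinking:
        migrated["thinking"] = {"type": "adaptive"}
    return migrated


old_request = {
    "model": "claude-opus-4-6",  # placeholder: your previous model ID
    "max_tokens": 16000,
    "thinking": {"type": "enabled", "budget_tokens": 10000},
    "messages": [
        {"role": "user", "content": "Explain why the sum of two even numbers is always even."}
    ],
}
new_request = migrate_to_adaptive(old_request)
print(new_request["model"], new_request["thinking"])
```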

Everything above is the mental model. Below, the execution: 10 highest-ROI moves for 4.7 that actually move the bill, my remote setup, and Cowork/Dispatch gotchas 👇

4. Cost Control on Opus 4.7

$20/month is enough to poke at Claude. It’s not enough to run agents. And 4.7 is more expensive than 4.6 by default, for three reasons: a new tokenizer (1.0–1.35× tokens per input), adaptive thinking with generous budgets, and high-res vision at 3× tokens.

The ten highest-ROI moves (they can cut tokens significantly; in my setup, by 60–80%):


Subscribe to The Product Compass to keep reading this post and get 7 days of free access to the full post archives.